An Automated Voice Calling Chatbot using Amazon Lex, Amazon Connect, Amazon Polly and AWS Lambda!

But why?

In any client-sourcing business, there are scenarios where employees have to make monotonous calls, which takes away time they could spend on more focussed work that adds value to the business. The aim of this project is to address this issue directly by automating these calls with a chatbot, so that the business can redirect these employees to higher-value work.

Another issue for a client-sourcing business in its infancy is that only a limited number of employees are available to make these calls. Once the calls are shifted over to a bot, they can be scaled up and the business is no longer bottlenecked by a fixed number of employees.

How was this achieved?

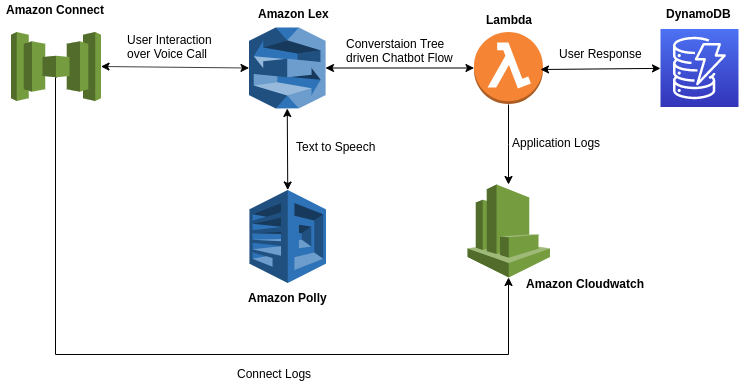

This was achieved by using AI-enabled Amazon Web Services such as Amazon Lex and Amazon Polly, a contact center service called Amazon Connect and a Serverless Function (Because who wants to pay for idle time, right?). Wondering what these are, you’ll have to wait for that, but first, let me give you an overview of the architecture used below,

The above architecture might feel daunting at first, but in reality it’s as straightforward as it gets. Let me break down the flow by giving a brief overview of each of these services.

Amazon Connect

As Amazon describes it, Amazon Connect is an omnichannel cloud contact center. What makes it useful for this project is that it comes with out-of-the-box integrations with Amazon’s AI-enabled services like Amazon Lex and Amazon Polly.

To get started, you create a contact flow, which offers multiple ways of handling the user’s input. One of them is Amazon Lex: you integrate your Lex chatbot with the contact flow, and all of the user’s input is passed to the bot, which replies to each of the user’s responses.

Amazon Lex

Amazon Lex is AWS’s AI-enabled service for building conversational interfaces. It is driven by built-in functionality such as Automatic Speech Recognition (ASR) for converting speech to text and Natural Language Processing (NLP) for classifying the user’s intent, both powered by the same deep learning technologies that power Amazon Alexa.

Conversational interfaces created with Amazon Lex can also, optionally, be driven by AWS Lambda functions, which is what I’ve used in this project to drive our chatbots.

Amazon Lex also comes integrated with Amazon Polly. More on this below.

Amazon Polly

Amazon Polly is AWS’s AI-enabled service for converting text into lifelike speech. I say lifelike because its Text-to-Speech (TTS) engine uses deep learning, so the synthesized speech actually sounds like a human voice.

It also offers Neural Text-to-Speech (NTTS) voices, which produce even more natural-sounding, higher-quality speech than the standard engine.

For this project, however, I used the standard TTS engine, which offers voices in a variety of accents. I chose English (India) voices so that the bot would sound familiar to our target audience, and it works like a charm.

The text passed to Amazon Polly is also configurable: using Speech Synthesis Markup Language (SSML), you can modify the speech rate, add emphasis to certain parts of the text and do a lot more.
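As a small illustration of the SSML features mentioned above, here is a minimal Python sketch that wraps a prompt in the `<speak>`, `<prosody>`, `<emphasis>` and `<break>` tags that Amazon Polly understands. The helper name `to_ssml` and its parameters are hypothetical; this is not the code used in this project, just one way such markup could be generated.

```python
from typing import Optional
from xml.sax.saxutils import escape


def to_ssml(text: str, rate: str = "medium", emphasis: Optional[str] = None,
            pause_ms: int = 0) -> str:
    """Wrap plain text in an SSML document for Amazon Polly (hypothetical helper).

    rate     -> value for <prosody rate="...">, e.g. "slow", "medium" or "90%"
    emphasis -> a substring of `text` to wrap in <emphasis level="strong">
    pause_ms -> if > 0, a <break> appended at the end to give the caller time
    """
    if emphasis and emphasis in text:
        # Split the raw text first, then escape each piece, so the tag is
        # never inserted inside an escaped entity such as "&amp;".
        before, after = text.split(emphasis, 1)
        body = (escape(before)
                + f'<emphasis level="strong">{escape(emphasis)}</emphasis>'
                + escape(after))
    else:
        body = escape(text)
    if pause_ms > 0:
        body += f'<break time="{pause_ms}ms"/>'
    return f'<speak><prosody rate="{rate}">{body}</prosody></speak>'


print(to_ssml("Are you interested in this task?", rate="90%", emphasis="interested"))
```

The call above produces `<speak><prosody rate="90%">Are you <emphasis level="strong">interested</emphasis> in this task?</prosody></speak>`, so the key word is stressed and the whole sentence is spoken slightly slower than the default rate.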

AWS Lambda

This is the final piece of the puzzle. I’ve used AWS Lambda, AWS’s serverless compute service, to drive the logic in our Lex bot. The logic is driven by a custom-made tree structure that stores the bot’s conversation flow and, based on the current user context, decides what the next set of actions and responses will be.
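The tree itself isn’t shown in this post, so the following is only a minimal sketch of one way such a structure could look, assuming each node holds the bot’s prompt and maps a normalised user answer to the next node. `ConversationNode`, `next_node` and the sample script are hypothetical names chosen for illustration.

```python
from dataclasses import dataclass, field
from typing import Dict, Optional


@dataclass
class ConversationNode:
    """One step of a scripted call (hypothetical): what the bot says and where each answer leads."""
    prompt: str
    # Maps a normalised answer (e.g. "yes" / "no") to the next node.
    children: Dict[str, "ConversationNode"] = field(default_factory=dict)
    # Node to use when the answer matches no child; None means "repeat this node".
    fallback: Optional["ConversationNode"] = None

    @property
    def is_leaf(self) -> bool:
        return not self.children


def next_node(current: ConversationNode, answer: str) -> ConversationNode:
    """Pick the next step from the caller's answer; unmatched answers fall back."""
    key = answer.strip().lower()
    if key in current.children:
        return current.children[key]
    return current.fallback or current


# A tiny hypothetical script for gauging interest in a task.
goodbye = ConversationNode("Thank you for your time. Goodbye!")
details = ConversationNode("Great! Can we share the details with you by SMS?",
                           children={"yes": goodbye, "no": goodbye})
root = ConversationNode("Hi! Are you interested in taking up a new task?",
                        children={"yes": details, "no": goodbye})

print(next_node(root, " Yes ").prompt)   # Great! Can we share the details with you by SMS?
print(next_node(root, "maybe") is root)  # True: an unmatched answer repeats the question
```

Keeping the flow as data rather than as nested `if` statements means a new call script only needs a new tree, while the code that walks it stays the same.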

This Lambda function is attached to our Lex bot as its validation code hook, so every user input in the conversation is passed to the custom logic. More on this in a later part of this series.
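To show how such a hook can steer the conversation, here is a minimal sketch of a Python Lambda handler written against the Amazon Lex (V1) code-hook event and response format (`currentIntent`, `inputTranscript`, `sessionAttributes`, `dialogAction`). Several things are assumptions: that the bot is a Lex V1 bot, that its intent has a slot named `Answer`, and that the position in the script is kept in a session attribute called `step`. The `SCRIPT` dictionary is a hard-coded, hypothetical stand-in for the conversation tree.

```python
from typing import Any, Dict
from xml.sax.saxutils import escape

# Hypothetical, hard-coded script: step id -> (prompt, {answer: next step id}).
# It stands in for the conversation tree described above.
SCRIPT = {
    "ask_interest": ("Are you interested in taking up a new task?",
                     {"yes": "ask_sms", "no": "end"}),
    "ask_sms": ("Can we send you the details by SMS?",
                {"yes": "end", "no": "end"}),
    "end": ("Thank you for your time. Goodbye!", {}),
}


def _ssml_message(text: str) -> Dict[str, str]:
    # With contentType "SSML", Lex hands the markup to Amazon Polly.
    return {"contentType": "SSML", "content": f"<speak>{escape(text)}</speak>"}


def lambda_handler(event: Dict[str, Any], context: Any) -> Dict[str, Any]:
    """Dialog (validation) code hook for a Lex V1 bot (sketch)."""
    session = dict(event.get("sessionAttributes") or {})
    intent = event["currentIntent"]

    if "step" not in session:
        # First turn: ask the opening question regardless of what was said.
        step = "ask_interest"
    else:
        answer = (event.get("inputTranscript") or "").strip(" .!?").lower()
        # Follow the matching branch; an unrecognised answer repeats the question.
        step = SCRIPT[session["step"]][1].get(answer, session["step"])

    session["step"] = step  # session attribute values must be strings
    prompt, branches = SCRIPT[step]

    if not branches:
        # Leaf of the script: say goodbye and end the conversation.
        return {
            "sessionAttributes": session,
            "dialogAction": {
                "type": "Close",
                "fulfillmentState": "Fulfilled",
                "message": _ssml_message(prompt),
            },
        }

    # Otherwise ask the next question and wait for the caller's reply.
    slots = dict(intent.get("slots") or {})
    slots["Answer"] = None  # clear the hypothetical slot so Lex asks for it again
    return {
        "sessionAttributes": session,
        "dialogAction": {
            "type": "ElicitSlot",
            "intentName": intent["name"],
            "slots": slots,
            "slotToElicit": "Answer",
            "message": _ssml_message(prompt),
        },
    }
```

Returning `ElicitSlot` keeps the call going and makes Lex wait for the next utterance, while `Close` ends the conversation. Because session attributes travel with every request, the Lambda function itself stays stateless.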

Other AWS Services

I’ve also used some other AWS services, such as Amazon DynamoDB as the database store and Amazon CloudWatch for application logging and monitoring.
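As a rough idea of how these two services could fit into the Lambda function, here is a minimal sketch that stores a call’s outcome in DynamoDB and logs it. It assumes the caller passes in a boto3 DynamoDB `Table` resource; the table, its key schema and the attribute names are all hypothetical. Inside Lambda, records written through Python’s standard `logging` module end up in the function’s CloudWatch Logs log group.

```python
import logging
import time
from typing import Any, Dict

# In the Lambda runtime, the root logger's output is shipped to CloudWatch Logs.
logger = logging.getLogger()
logger.setLevel(logging.INFO)


def record_call_outcome(table: Any, phone_number: str, contact_id: str,
                        outcome: str) -> Dict[str, Any]:
    """Store one call's result and log it (sketch).

    `table` is expected to be a boto3 Table resource, e.g.
    boto3.resource("dynamodb").Table("CallOutcomes"); the table name and
    attribute names here are hypothetical.
    """
    item = {
        "phone_number": phone_number,      # assumed partition key
        "contact_id": contact_id,          # assumed sort key
        "outcome": outcome,                # e.g. "interested" / "not_interested"
        "recorded_at": int(time.time()),   # int, since boto3 rejects Python floats
    }
    table.put_item(Item=item)
    logger.info("Recorded outcome %s for contact %s", outcome, contact_id)
    return item
```

Passing the table in, rather than creating it inside the function, keeps the function easy to test with a stand-in object.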

OK! I get what these services are, but how do they work together?

So now that you understand what each service is used for, let me give you an example of how this architecture works.

Let’s say the business wants to gauge users’ interest in performing a certain task. Here’s how that would work:

1. The system calls the user on their designated phone number (the call is placed by Amazon Connect).

2. Once the user picks up the call, Amazon Connect passes the user’s context to the defined contact flow so that the user can start conversing with the bot.

3. The Amazon Lex bot receives the user’s speech, converts it into text and passes it to the Lambda function that drives the chatbot logic.

4. The AWS Lambda function interprets the text in light of the user’s context, performs the relevant actions and returns a response in text.

5. This text is converted to speech using Amazon Polly and passed to Amazon Connect, which relays it to the user.

With every user response, steps 3 to 5 repeat.
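The outbound call that starts this flow can be sketched in Python using the `start_outbound_voice_contact` operation of boto3’s Amazon Connect client. This is a minimal sketch under assumptions: the client is passed in (for example `boto3.client("connect")`), outbound calling is enabled on your Connect instance, and the instance ID, contact flow ID, phone numbers and attribute names are placeholders you would take from your own setup.

```python
from typing import Any, Dict, Optional


def start_interest_call(connect_client: Any, instance_id: str, contact_flow_id: str,
                        source_number: str, destination_number: str,
                        attributes: Optional[Dict[str, str]] = None) -> str:
    """Ask Amazon Connect to dial a user and run the given contact flow (sketch).

    `connect_client` is expected to be boto3.client("connect"); every ID and
    number passed in is a placeholder from your own Connect instance.
    Returns the ContactId of the new call.
    """
    params: Dict[str, Any] = {
        "DestinationPhoneNumber": destination_number,  # E.164 format, e.g. "+91..."
        "ContactFlowId": contact_flow_id,
        "InstanceId": instance_id,
        "SourcePhoneNumber": source_number,  # a number claimed in the instance
    }
    if attributes:
        # Contact attributes carry the user's context into the contact flow;
        # keys and values must be strings.
        params["Attributes"] = attributes
    response = connect_client.start_outbound_voice_contact(**params)
    return response["ContactId"]
```

A hypothetical call would look like `start_interest_call(boto3.client("connect"), "<instance-id>", "<contact-flow-id>", "+1...", "+91...", {"userName": "Asha"})`; the contact flow can then read `userName` as a contact attribute and hand it to the Lex bot.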

How does the business benefit?

Well, to answer this, let me take you back to the initial problem statement. The two basic challenges I set out to solve were, first, automating monotonous voice calls and, second, scaling the number of agents that could make such calls.

Both challenges were solved: the first by driving the monotonous conversations with a chatbot, and the second by making the calls through a cloud contact center that scales with usage.

On top of that, a lot of cost optimization was also achieved: as you may have noticed, all of the above services work on a pay-as-you-go model, so you are only charged when these services are in use and you do not pay for idle time.

Where can I find a more detailed view of this implementation?

In the coming weeks, I will be posting more blogs in this series to detail how I made use of each service.